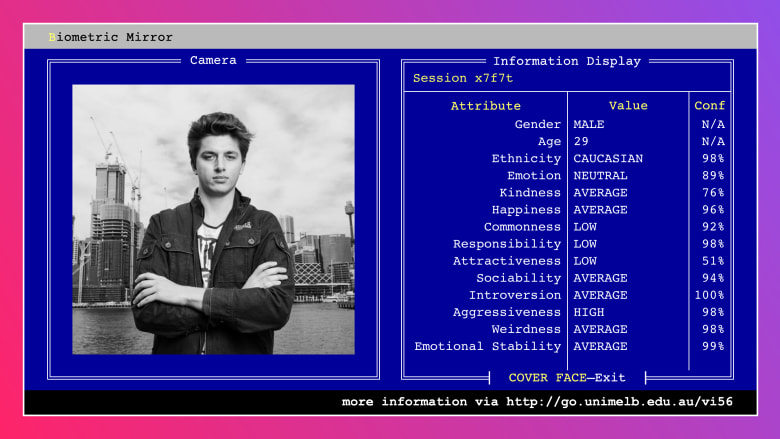

This algorithm says I'm aggressive, irresponsible and unattractive. But should

Source: Ben Grubb

On July 26 at 3.31pm I received my results for the equivalent of a psychometric test, only they were based not on hundreds of questions to determine my traits but simply a photograph of yours truly.

The picture, taken in May 2015 by Fairfax Media photographer James Brickwood, was fed into an algorithm developed by academic Dr Niels Wouters, from the University of Melbourne, built to analyse and judge people to predict their characteristics (the lowlights and highlights).

It's the same technology some governments and law-enforcement agencies use around the world to determine whether you might be a potential terrorist, psychopath or murderer.

According to the algorithm, I looked male (correct), 29 years old (incorrect; I was 24 at the time) and Caucasian (correct).

But far worse, the algorithm said — with a confidence level of 98 per cent — that my "aggressiveness" level was "high" and my responsibility and attractiveness levels were "low" (with 98 per cent and 51 per cent confidence respectively).

The results hit me hard. As a 27-year-old technology commentator and journalist looking back at a photograph taken only three years ago, how dare this unintelligent algorithm diagnose me as being unattractive, irresponsible, and 29!

Was I young, dumb and broke too? Dr Wouters, you've built evil, I thought, shut it down! This story was never seeing the light of day, I was sure.

But after going to a pub to drown my sorrows, I decided that a chat over the phone with Dr Wouters was the most appropriate course of action. What had I done to deserve this judgment, I wanted to ask him. But not before subjecting him to the same test (the algorithm said he was 36 and happy with an "average" level of attractiveness). Damn him!

I also wanted to provide him with a new photograph to re-scan.

Suddenly, I was attractive and 24 in the new photo. Then, in the one with my glasses on, I was 28.

The algorithm was clearly flawed. And that's the point Dr Wouters is demonstrating.

"Our study aims to provoke challenging questions about the boundaries of artificial intelligence (AI)," Dr Wouters says. "It shows users how easy it is to implement AI that discriminates in unethical or problematic ways which could have societal consequences. By encouraging debate on privacy and mass-surveillance, we hope to contribute to a better understanding of the ethics behind AI."

Called Biometric Mirror, Dr Wouters' computer algorithm, which is on display at the University of Melbourne, detects a range of facial characteristics in seconds. It then compares the data to that of thousands of other faces evaluated for their psychometrics by a group of crowd-sourced responders.

Dr Wouters points to instances where governments and law-enforcement agencies have begun to use AI that has real-world consequences for people's rights, literally judging them by their "look" based on algorithmic bias and assumptions.

Dr Wouters' research project was built to explore concerns about consent, data storage and algorithmic bias.

“With the rise of AI and big data, government and businesses will increasingly use CCTV cameras and interactive advertising to detect emotions, age, gender and demographics of people passing by,” Dr Wouters says, pointing to examples like Westfield, which uses such technology to estimate the age, gender and mood of shoppers in its malls.

“The use of AI is a slippery slope that extends beyond the realm of shopping and advertising,” Dr Wouters says. “Imagine having no control over an algorithm that wrongfully considers you unfit for management positions, ineligible for university degrees, or shares your photo publicly without your consent. One of your traits is chosen – say, your level of responsibility – and Biometric Mirror asks you to imagine this information is now being shared with your insurer or future employer.

“This project is a transparent demonstration of the potential consequences for individuals. Biometric Mirror only calculates the estimated public perception towards facial appearance.

“It is limited by its inaccuracy, because only a relatively small and crowd-sourced dataset informed its design. It is inappropriate to draw meaningful conclusions about psychological states from Biometric Mirror.”

Dr Wouters adds it is important to note that Biometric Mirror is not a tool for psychological analysis.

If that's the case, it might be time for a re-think of the technology's use.
