Machine learning at the speed of light: New paper demonstrates use of photonic s

Source: Maggie Pavlick

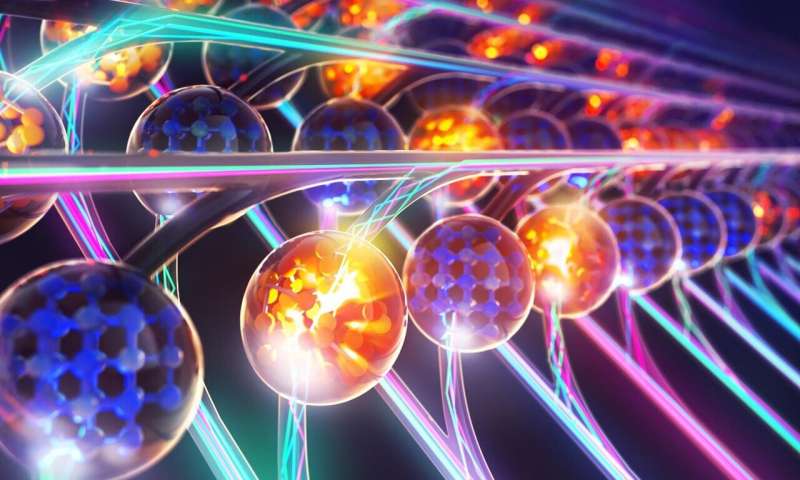

Illustration showing parallel convolutional processing using an integrated photonic tensor core. New research published this week in the journal Nature examines the potential of photonic processors for artificial intelligence applications. Credit: XVIVO


As we enter the next chapter of the digital age, data traffic continues to grow exponentially. To further enhance artificial intelligence and machine learning, computers will need the ability to process vast amounts of data as quickly and as efficiently as possible.

Conventional computing methods are not up to the task, but in looking for a solution, researchers have seen the light—literally.

Light-based processors, called photonic processors, enable computers to complete complex calculations at incredible speeds. New research published this week in the journal Nature examines the potential of photonic processors for artificial intelligence applications. The results demonstrate for the first time that these devices can process information rapidly and in parallel, something that today's electronic chips cannot do.

"Neural networks 'learn' by taking in huge sets of data and recognizing patterns through a series of algorithms," explained Nathan Youngblood, assistant professor of electrical and computer engineering at the University of Pittsburgh Swanson School of Engineering and co-lead author. "This new processor would allow it to run multiple calculations at the same time, using different optical wavelengths for each calculation. The challenge we wanted to address is integration: How can we do computations using light in a way that's scalable and efficient?"

The fast, efficient processing the researchers sought is ideal for applications like self-driving vehicles, which need to process the data they sense from multiple inputs as quickly as possible. Photonic processors can also support applications in cloud computing, medical imaging, and more.

"Light-based processors for speeding up tasks in the field of machine learning enable complex mathematical tasks to be processed at high speeds and throughputs," said senior co-author Wolfram Pernice at the University of Münster. "This is much faster than conventional chips which rely on electronic data transfer, such as graphics cards or specialised hardware like TPUs (Tensor Processing Units)."

The research was conducted by an international team of researchers from institutions including Pitt, the University of Münster in Germany, the Universities of Oxford and Exeter in England, the École Polytechnique Fédérale de Lausanne (EPFL) in Switzerland, and the IBM Research Laboratory in Zurich.

Schematic representation of a processor for matrix multiplications that runs on light. Credit: University of Oxford

The researchers combined phase-change materials—the storage material used, for example, on DVDs—and photonic structures to store data in a nonvolatile manner without requiring a continual energy supply. This study is also the first to combine these optical memory cells with a chip-based frequency comb as a light source, which is what allowed them to calculate on 16 different wavelengths simultaneously.
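To make the idea of computing on many wavelengths at once more concrete, here is a minimal, idealized Python sketch. It assumes (a simplification for illustration, not the paper's actual design) that each phase-change memory cell stores one matrix weight as an optical transmission level between 0 and 1, that each wavelength carries its own non-negative input vector through the same array of cells, and that each output is a photodetector summing the attenuated light. The function name `photonic_matvec` is hypothetical, and loss, noise, crosstalk, and signed weights are all ignored.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def photonic_matvec(transmission: Matrix, inputs_per_wavelength: List[Vector]) -> List[Vector]:
    """Idealized wavelength-multiplexed matrix-vector products (hypothetical model).

    transmission[i][j] is the assumed fraction of light passed from input j to
    output i, standing in for one non-volatile phase-change cell.
    inputs_per_wavelength[w] is the non-negative input intensity vector on
    wavelength channel w. Returns one output vector per channel.
    """
    # Stored weights are modeled as passive attenuation, so they must lie in [0, 1].
    for row in transmission:
        for t in row:
            if not 0.0 <= t <= 1.0:
                raise ValueError("transmission values must lie in [0, 1]")

    n_cols = len(transmission[0])
    outputs = []
    for x in inputs_per_wavelength:
        if len(x) != n_cols:
            raise ValueError("input length must match the number of matrix columns")
        # Each output row sums the attenuated intensities, like a photodetector.
        # Every channel reuses the same stored weights; in the optical picture
        # all channels would pass through the array at the same time.
        outputs.append([sum(t * xj for t, xj in zip(row, x)) for row in transmission])
    return outputs
```

Feeding 16 input vectors, one per hypothetical comb line, returns 16 matrix-vector products. A digital computer evaluates them one after another in this loop, whereas in the optical picture they would all emerge from the same pass through the stored weights.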

In the paper, the researchers used the technology to build a convolutional neural network that recognizes handwritten digits. They found that the method achieved unprecedented data rates and computing densities.

"The convolutional operation between input data and one or more filters—which can be a highlighting of edges in a photo, for example—can be transferred very well to our matrix architecture," said Johannes Feldmann, graduate student at the University of Münster and lead author of the study. "Exploiting light for signal transference enables the processor to perform parallel data processing through wavelength multiplexing, which leads to a higher computing density and many matrix multiplications being carried out in just one timestep. In contrast to traditional electronics, which usually work in the low GHz range, optical modulation speeds can be achieved with speeds up to the 50 to 100 GHz range."
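The mapping Feldmann describes can be illustrated with the standard "im2col" technique, sketched below in plain Python as an assumption about how such a mapping can work rather than as the authors' exact scheme. Each k x k patch of the image is flattened into a vector, the filters are flattened into the rows of a fixed weight matrix, and the convolution becomes a series of matrix-vector products. The function names are hypothetical, the sketch uses stride 1 and no padding, and, as in most CNN libraries, it computes cross-correlation (the kernel is not flipped). It is purely digital, so signed filter weights are allowed.

```python
from typing import List

Grid = List[List[float]]


def im2col(image: Grid, k: int) -> List[List[float]]:
    """Unroll every k x k patch (stride 1, no padding) into one flat row."""
    h, w = len(image), len(image[0])
    patches = []
    for r in range(h - k + 1):
        for c in range(w - k + 1):
            patches.append([image[r + i][c + j] for i in range(k) for j in range(k)])
    return patches


def conv2d_as_matmul(image: Grid, kernels: List[Grid]) -> List[Grid]:
    """Cross-correlate the image with each square kernel via matrix products.

    Rows of the patch matrix act as input vectors; the flattened kernels
    form the fixed weight matrix that every patch is multiplied against.
    """
    k = len(kernels[0])
    patches = im2col(image, k)
    # Weight matrix of shape (number of kernels, k * k).
    weights = [[v for row in kern for v in row] for kern in kernels]
    out_h, out_w = len(image) - k + 1, len(image[0]) - k + 1
    feature_maps = []
    for wvec in weights:
        # One dot product per patch; the patches are mutually independent.
        flat = [sum(a * b for a, b in zip(wvec, p)) for p in patches]
        # Fold the flat results back into a 2-D feature map.
        feature_maps.append([flat[r * out_w:(r + 1) * out_w] for r in range(out_h)])
    return feature_maps
```

With the edge-detecting kernel `[[1, -1], [1, -1]]`, an image whose values step from 0 to 1 between its second and third columns produces a response only at that boundary: `[[0, -2, 0], [0, -2, 0]]`. Because each patch is an independent input vector, the patches are a natural candidate for spreading across parallel channels such as separate wavelengths.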

The paper, "Parallel convolution processing using an integrated photonic tensor core," was published in Nature and coauthored by Johannes Feldmann, Nathan Youngblood, Maxim Karpov, Helge Gehring, Xuan Li, Maik Stappers, Manuel Le Gallo, Xin Fu, Anton Lukashchuk, Arslan Raja, Junqiu Liu, David Wright, Abu Sebastian, Tobias Kippenberg, Wolfram Pernice, and Harish Bhaskaran.
